I pass on the following edited clips from ChatGPT and Claude condensations of a conversation between Nate Hagens and Iain McGilchrist. While I think McGilchrist's left-hemisphere/right-hemisphere distinctions are a bit simplistic, the take-home messages are appropriate regardless of the particular brain-activity correlates invoked:

In episode 217 of Nate Hagens's The Great Simplification, Hagens is rejoined by philosopher and neuroscientist Iain McGilchrist, best known for The Master and His Emissary and The Matter With Things, for a wide-ranging conversation about what our current moment of civilizational crisis actually demands of us. The counterintuitive answer is not more urgency or more optimization, but a deliberate slowing down and an opening toward spaciousness, silence, and wonder. The conversation begins from Hagens's familiar "metacrisis" frame, in which ecological, economic, social, and psychological instabilities are interlocking expressions of a deeper human predicament. McGilchrist's contribution is to shift the diagnosis inward, toward the style of attention that modern culture rewards.

Left-brain dominance and the obscuring of value

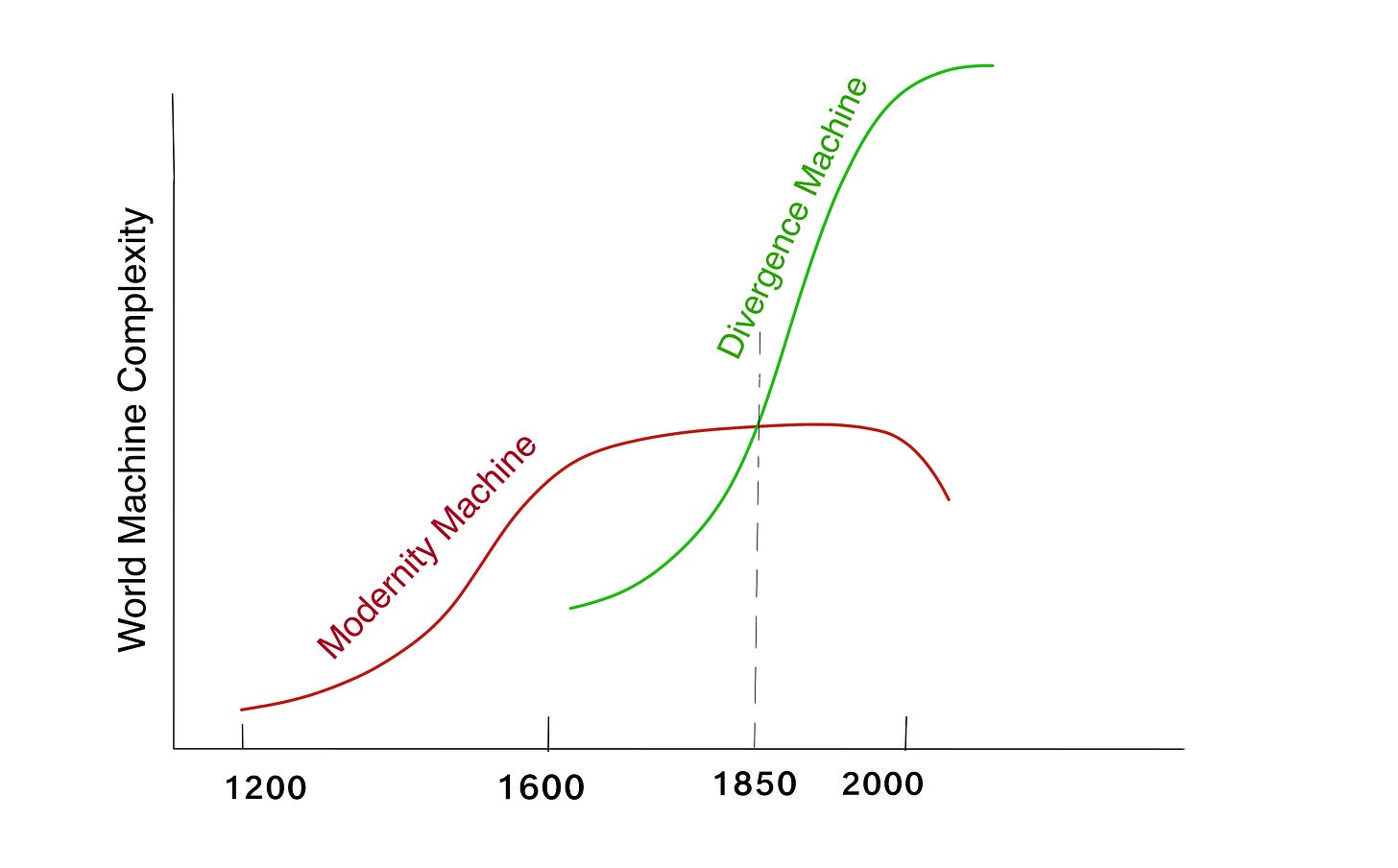

McGilchrist's central argument, developed across his major books, is that Western culture has become increasingly dominated by left-hemisphere modes of cognition — narrow, focused, categorical, explicit, and oriented toward control and manipulation. The right hemisphere, by contrast, attends to the whole, holds ambiguity, and remains open to what is living, relational, and interconnected. The trouble is not that we have a left hemisphere but that it has progressively usurped the role of the right as the governing perspective. This imbalance, McGilchrist argues, is not just a psychological curiosity: it is what underlies our collective inability to perceive and act on what truly matters — truth, goodness, and beauty. When the left hemisphere runs the show, these qualities become invisible, and what fills the vacuum is power, productivity, and the measurable.

Attention as the root of value

A thread running through the conversation is that attention is not neutral: what we attend to shapes what we value, and what we value shapes what we attend to. McGilchrist cites the commonly quoted estimate that roughly 99% of brain activity is unconscious, meaning that most of what guides our perception and behavior lies beneath deliberate awareness. The "invisible gorilla" studies of inattentional blindness illustrate this starkly: when our attention is narrowly locked onto a task, we routinely fail to see what is right in front of us. Applied to the metacrisis, this suggests that the problem is not primarily one of missing information or insufficient analysis. It is a crisis of attention itself. We are not seeing what matters because we have trained ourselves not to look.

The practice of spaciousness

The remedy McGilchrist proposes is not a political program or a technological fix. It is a shift in how we inhabit time. He urges listeners to resist what he calls the "mania for productivity" embedded in modern culture and instead to cultivate spaciousness: pauses, silence, deep listening, and what Buddhism calls śūnyatā, emptiness understood not as absence but as fertile, generative openness. He cites Rabbi Abraham Joshua Heschel's writing on the Sabbath as a model: the ancient practice of building non-doing into the structure of the week was not a concession to weakness but a recognition that renewal and wisdom require fallow time. McGilchrist also points to research on the psychology of creativity showing that insight characteristically emerges not during focused effort but in the unfocused intervals between: in the shower, on a walk, or in the hypnagogic state at the threshold of sleep.

Consciousness, panpsychism, and the thread connecting everything

The conversation moves into deeper philosophical territory when Hagens and McGilchrist explore what might underlie the universe's apparent orientation toward complexity, beauty, and relation. McGilchrist is sympathetic to panpsychism, the view that some form of experience or proto-consciousness pervades reality, and to panentheism, the view that the divine pervades the world while also exceeding it. On this picture the universe is not a collection of inert objects but a relational, creative process. He draws on Whitehead's process philosophy, Bohm's implicate order, and Schelling's image of the self as a whirlpool: a temporary pattern that arises from and returns to a larger flow. Death, in this frame, is not mere termination but a kind of completion, and the obsession with indefinite life extension through technology reflects the left hemisphere's refusal to accept its own finitude.

A small percentage can shift the whole

McGilchrist closes with a message that is both modest and radical: he does not expect, or require, a majority conversion. Even a small percentage of people genuinely living differently, embodying humility, compassion, awe, and wonder, could begin to shift what he calls "cultural consciousness." This resonates with complexity theory's sensitivity to initial conditions and with the sociological literature on tipping points. The implication is that the most consequential thing an individual can do in a moment of civilizational turbulence may be not to do more, advocate louder, or optimize harder, but to slow down enough to actually see, and to live from that seeing.

For those already drawn to predictive processing frameworks: McGilchrist's account of hemispheric asymmetry maps suggestively onto the distinction between top-down predictions held with high precision (left-hemisphere-dominant) and the more open, receptive updating of priors that Friston's active inference framework associates with high-entropy, exploratory sampling of the world. The right hemisphere, on this reading, is the brain's better Bayesian: the one willing to be surprised.
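For readers who want the precision-weighting idea made concrete, here is a minimal sketch of a standard conjugate-Gaussian belief update, where precision is 1/variance. It is textbook Bayesian arithmetic, not anything McGilchrist or Friston specifies; the function name `precision_weighted_update` and all the numbers are illustrative assumptions, and labelling one observer "rigid" and the other "open" is only the hemispheric analogy suggested in the paragraph above.

```python
def precision_weighted_update(prior_mean, prior_precision, observation, obs_precision):
    """Combine a Gaussian prior with one Gaussian observation (hypothetical helper).

    Precision = 1 / variance. The belief moves toward the observation in
    proportion to the observation's share of the total precision.
    """
    posterior_precision = prior_precision + obs_precision
    # Weight given to the prediction error: small when the prior is held tightly.
    gain = obs_precision / posterior_precision
    prediction_error = observation - prior_mean
    posterior_mean = prior_mean + gain * prediction_error
    return posterior_mean, posterior_precision


# The same surprising observation presented to two differently confident observers.
observation = 10.0
# "Rigid" observer: prior held with high precision, so the surprise barely registers.
rigid = precision_weighted_update(0.0, prior_precision=9.0, observation=observation, obs_precision=1.0)
# "Open" observer: prior held loosely, so the same surprise moves the belief much further.
open_ = precision_weighted_update(0.0, prior_precision=1.0, observation=observation, obs_precision=1.0)

print(rigid)  # (1.0, 10.0)
print(open_)  # (5.0, 2.0)
```

With identical evidence, the tightly held prior shifts only a tenth of the way toward the observation, while the loosely held one shifts halfway: a small numerical picture of what "willing to be surprised" means in Bayesian terms.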

YouTube link to the full conversation